A dataset's Security tab

Updated

by Patrick Smith

Access and permissions can be specified for any individual dataset.

To do this, go to the dataset's Security tab, which offers several tools. You can apply a filter to control which fields in a dataset are visible in the portal, and you can limit the number of API calls allowed on the dataset. Most importantly, you can grant access and permissions to specific users and groups (and not to others).

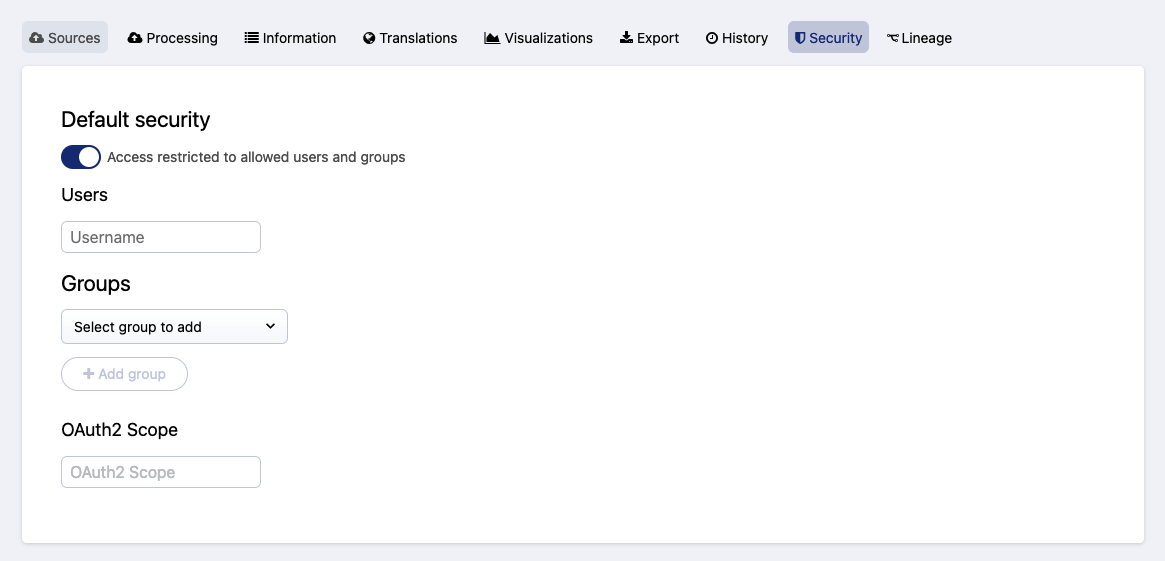

Default security toggle

To restrict access to the dataset you're editing, simply activate the "Default security" toggle.

Once activated, you are able to manage access and permissions to this dataset, both by user and by group.

Note that if you restrict access but do not specify any users or groups, only your workspace's admins will have access to this dataset.

To make new datasets restricted from the start, go to Configuration > Security in your back office. There, under "Make new datasets private by default," activate the toggle.

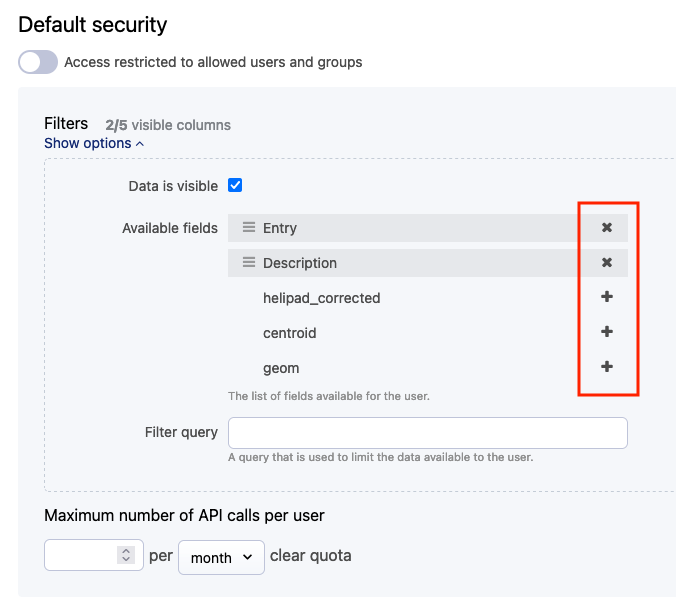
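The access rule described in this section can be pictured as a small decision function. The following is a minimal sketch in Python, not Opendatasoft's implementation: the `User` and `Dataset` classes and the `can_access` function are hypothetical names introduced for illustration, and the model ignores any portal-wide security policies.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    """Hypothetical user record, used only for this illustration."""
    name: str
    groups: set = field(default_factory=set)
    is_admin: bool = False


@dataclass
class Dataset:
    """Hypothetical model of the settings in a dataset's Security tab."""
    restricted: bool = False  # state of the "Default security" toggle
    allowed_users: set = field(default_factory=set)
    allowed_groups: set = field(default_factory=set)


def can_access(user: User, dataset: Dataset) -> bool:
    """Return True if the user may access the dataset in this simplified model."""
    if not dataset.restricted:
        return True  # access to the dataset is not restricted
    if user.is_admin:
        return True  # workspace admins keep access to restricted datasets
    if user.name in dataset.allowed_users:
        return True  # permission added by user
    # Permission added through at least one of the user's groups.
    return bool(user.groups & dataset.allowed_groups)
```

In this model, a restricted dataset with no users or groups listed is accessible only to admins, which matches the note above.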

Filtering visible fields and records and limiting API calls for all users

Even without restricting the dataset to specific users or groups, you can still limit which of this dataset's fields are visible in the portal, as well as limit the number of API calls any one user can make to that dataset.

Click on Show options under "Filters" to choose which fields (or columns) are visible in the portal. Click on the "+" or "x" icons to add fields to, or remove them from, the set that will be visible to your users.

You can also use the same query language used to search within a dataset to limit which records (or rows) are visible.

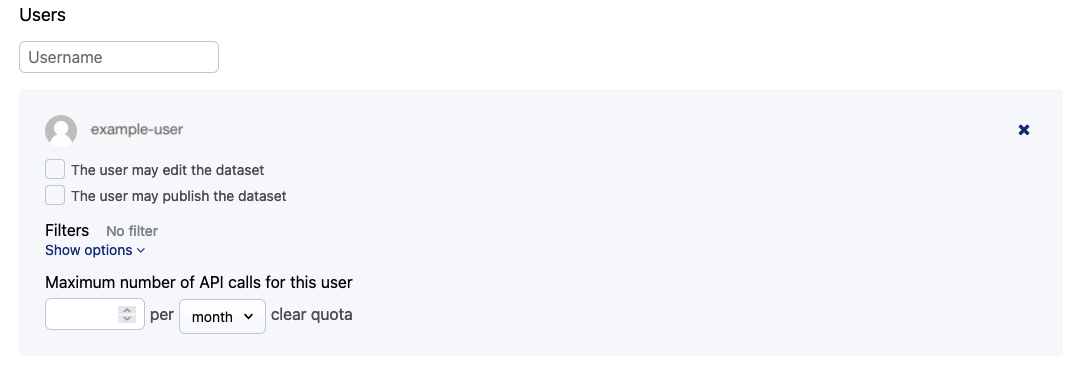
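To make the effect of the two filters concrete, here is a minimal Python sketch that mimics them on sample data. It is only an illustration under stated assumptions: the platform applies these filters itself, the record filter is written there in the dataset query language rather than as a Python function, and `apply_filters`, the field names, and the sample records are all hypothetical.

```python
def apply_filters(records, visible_fields, record_predicate=None):
    """Keep only the matching records (rows), then only the visible fields (columns)."""
    result = []
    for record in records:
        # Record filter: skip rows that do not match the condition.
        if record_predicate is not None and not record_predicate(record):
            continue
        # Field filter: drop every column that is not marked as visible.
        result.append({k: v for k, v in record.items() if k in visible_fields})
    return result


# Hypothetical sample data.
records = [
    {"city": "Paris", "population": 2100000, "contact_email": "a@example.org"},
    {"city": "Lyon", "population": 520000, "contact_email": "b@example.org"},
]

public_view = apply_filters(
    records,
    visible_fields={"city", "population"},
    record_predicate=lambda r: r["population"] > 1_000_000,
)
print(public_view)  # [{'city': 'Paris', 'population': 2100000}]
```

The `contact_email` column is hidden from every row, and the Lyon row is hidden entirely because it does not match the record condition.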

Adding permissions by user

Once you have restricted access to a dataset, you can allow specific users access to it.

Simply begin typing their identifier into the "Users" field, and Opendatasoft will suggest the list of your users starting with those letters. Click on a user to add them to the dataset.

Once they're added, you can choose whether they are permitted to edit and publish the dataset, and you can limit the number of API calls this user is allowed to perform.

The same options are available here to filter which fields and records (columns and rows) are visible in your portal. Remember, however, that anyone with the right to edit the dataset will still be able to see the full dataset in their back office.

Adding permissions by group

Once you have restricted access to a dataset, you can allow access to specific groups of users.

Click on Select group and choose the group from the list. Once you've added a group, its members are listed on the right.

You can then choose whether that group is permitted to edit and publish the dataset, and you can limit the number of API calls any single member of the group is allowed to perform.

The same options are available here to filter which fields and records (columns and rows) are visible in your portal. Remember, however, that anyone with the right to edit the dataset will still be able to see the full dataset in their back office.

OAuth2 Scope

Opendatasoft has implemented the OAuth2 authorization flow. For more information, see the Opendatasoft documentation, especially the Explore API documentation.

Use this field to specify the scope, which defines who can access the dataset using OAuth2.
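For context, a scope is typically passed by a client application as the `scope` parameter of an OAuth2 authorization request. The sketch below builds such a request URL using the standard OAuth2 authorization-code parameters. Everything specific in it is an assumption for illustration: the endpoint URL, client identifier, redirect URI, and scope value are placeholders, and the exact endpoint and scope format expected by your portal should be taken from the Opendatasoft documentation.

```python
from urllib.parse import urlencode


def build_authorization_url(authorize_endpoint, client_id, redirect_uri, scope, state):
    """Build an OAuth2 authorization-code request URL with standard parameters."""
    params = {
        "response_type": "code",  # authorization-code flow
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,           # the scope the application is requesting
        "state": state,           # opaque value echoed back to the client
    }
    return f"{authorize_endpoint}?{urlencode(params)}"


# All values below are placeholders, not real Opendatasoft settings.
url = build_authorization_url(
    authorize_endpoint="https://portal.example.com/oauth2/authorize/",
    client_id="my-client-id",
    redirect_uri="https://app.example.com/callback",
    scope="my-scope",
    state="random-state-value",
)
print(url)
```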